Statement on Tripos transparency

Tripos examinations in CUED are designed to place candidates in the correct order of merit, using an equitable procedure that gives the best information about relative abilities, and to set the boundaries for the degree classification. In addition, the examinations determine whether a student has reached the level required to progress to the next year of the Tripos. The systems in place for examination papers are not designed to provide detailed feedback, and are incompatible with doing so. The examination processes are highly regulated and subject to rigorous quality control, with internal and external scrutiny. The procedures to be followed for each examination are available on the Teaching Office index page: see Faculty Board Guidelines for Examiners under ‘Information for all undergraduates’.

Mark guidelines (a percentage or a number of marks available) are provided for every question on every paper. Markers are instructed to watch how the average marks on each question are shaping up as the first few tens of scripts are marked. If an imbalance is detected, they are advised to adjust the details of marking, and to go back and re-mark the scripts accordingly. The aim is to achieve the target mark distribution without having to resort to scaling. Bearing in mind that the main task of examiners is to place candidates in the correct relative order, it is scarcely feasible to have an individual paper re-marked by a different marker: that marker would have to re-mark a substantial number of the scripts in order to guarantee consistency and fairness.

In addition to the rigorous internal quality control, the entire process is scrutinised by the External Examiners, who are experienced academics from other universities. They are given time and opportunity to inspect whatever examination scripts and coursework they wish. They are told the tentative positions of the class boundaries, so that they can concentrate on the performance of candidates close to those boundaries. They then attend the final meeting of Examiners, and contribute their views on the fairness of the process and on the appropriateness of the allocated class boundaries.

The examinations process is very time-consuming and resource-intensive. Changing the procedures to enable detailed feedback to students would mean compromising on other aspects, which would affect the delivery of the list of candidates in accurate rank order. Full feedback is, however, provided on the coursework, which constitutes 28% of IIA and at least 40% of IIB.

It is the established policy of the Faculty Board of Engineering that the proportions of candidates placed in the different Tripos classes should remain approximately fixed, as detailed in the Guidelines to Examiners for each examination. This is because the number of students in each year is large, so the statistics of large numbers make overall student performance far more consistent from year to year than any individual examiner can be when setting and marking questions.

Aggregate marks are obtained by adding the marks from separate papers. For simple addition to be statistically justifiable, and therefore fair, the mark distributions on the separate papers must be reasonably consistent. In Part I, where all students take essentially the same papers, it is enough to ask examiners to aim for the same mean mark. In Part IIA matters are very different. Many combinations of papers are taken, and if examiners were simply instructed to achieve the same average mark on every module, injustice would be done to some students. It is a matter of measurable fact that the cohorts of students taking different modules can differ very significantly in their ability, as revealed by examination results in previous years.
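
The effect can be illustrated with a small numerical sketch. The figures are invented for illustration and are not CUED data; the function name and the use of a uniform shift to reach a common mean are assumptions, chosen only as the simplest way to model "aim for the same mean mark" on two modules whose entry cohorts differ in ability.

```python
from statistics import mean


def shift_to_target_mean(marks, target_mean):
    """Shift every mark by the same amount so the module mean equals target_mean.

    Hypothetical helper: a stand-in for an examiner tuning the marking
    until the module average hits a common target.
    """
    offset = target_mean - mean(marks)
    return [m + offset for m in marks]


# Invented "true performance" figures on a common scale (assumption).
strong_cohort = [65, 70, 75, 80, 85]  # mean 75
weak_cohort = [40, 50, 55, 60, 70]    # mean 55

TARGET_MEAN = 60  # the same target imposed on both modules

strong_adjusted = shift_to_target_mean(strong_cohort, TARGET_MEAN)
weak_adjusted = shift_to_target_mean(weak_cohort, TARGET_MEAN)

# Two students of identical performance (70), one in each module.
mark_in_strong_module = strong_adjusted[strong_cohort.index(70)]
mark_in_weak_module = weak_adjusted[weak_cohort.index(70)]

print(mark_in_strong_module, mark_in_weak_module)
```

Under these assumptions the two students, who performed identically, receive marks 20 apart purely because of the company they keep in each module. This is the kind of injustice that a common target mean would produce in Part IIA.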

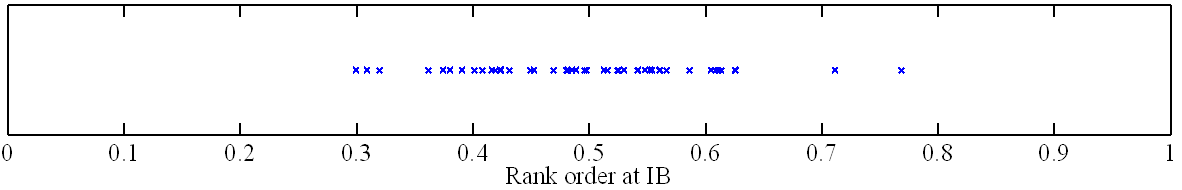

Scaling of marks in Parts IIA and IIB was introduced in response to criticisms from external examiners that students taking modules with a high-achieving entry cohort were being unfairly disadvantaged. In consequence a scheme was developed to try to achieve a more level playing field. We do not of course claim that our scheme is perfect, but we can produce data to demonstrate that it is a great deal more fair than the previous practice with no individual scaling. The schemes are different in detail for IIA and IIB, but the principle is the same. For the entry cohort of each module, a single number is derived from their performance in the previous year’s examination representing a mean value of student rank order. The plot below shows these mean values for IIA modules in a typical year: you will note that the range is extraordinarily high. This mean is used to give statistically justified guidelines to the examiner of each individual module, to specify target levels that correspond to a universal underlying statistical distribution of students, interpreted to fit the particular average value for each cohort.

Mean 1B rank order values of students entering different IIA modules
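
As a minimal sketch of the per-module number described above, the following computes the mean previous-year rank of the students entering each module. The student identifiers, module names, ranks and the function name are all hypothetical, and a plain arithmetic mean is an assumption: the exact formula used in the IIA and IIB schemes is not given in this statement.

```python
from collections import defaultdict
from statistics import mean


def cohort_mean_ranks(previous_rank, enrolments):
    """Return {module: mean previous-year rank of the students entering it}.

    previous_rank: {student: rank in the previous year's examination}
    enrolments:    {student: list of modules taken this year}
    Both structures are hypothetical; rank 1 is taken to be the top candidate.
    """
    ranks_by_module = defaultdict(list)
    for student, modules in enrolments.items():
        for module in modules:
            ranks_by_module[module].append(previous_rank[student])
    return {module: mean(ranks) for module, ranks in ranks_by_module.items()}


# Invented data: six students and three modules.
previous_rank = {"s1": 1, "s2": 2, "s3": 3, "s4": 4, "s5": 5, "s6": 6}
enrolments = {
    "s1": ["M1", "M2"],
    "s2": ["M1"],
    "s3": ["M1", "M2"],
    "s4": ["M2", "M3"],
    "s5": ["M3"],
    "s6": ["M3", "M2"],
}

cohort_means = cohort_mean_ranks(previous_rank, enrolments)
for module in sorted(cohort_means):
    print(module, cohort_means[module])
```

In this sketch a lower mean rank indicates a stronger entry cohort. How the cohort value is converted into target levels for each examiner is not specified in this statement, so that step is deliberately left out.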

Last updated on 08/10/2019 12:34